Cut your AI spend by 50% at scale.

Guaranteed.

Optimize your inference.

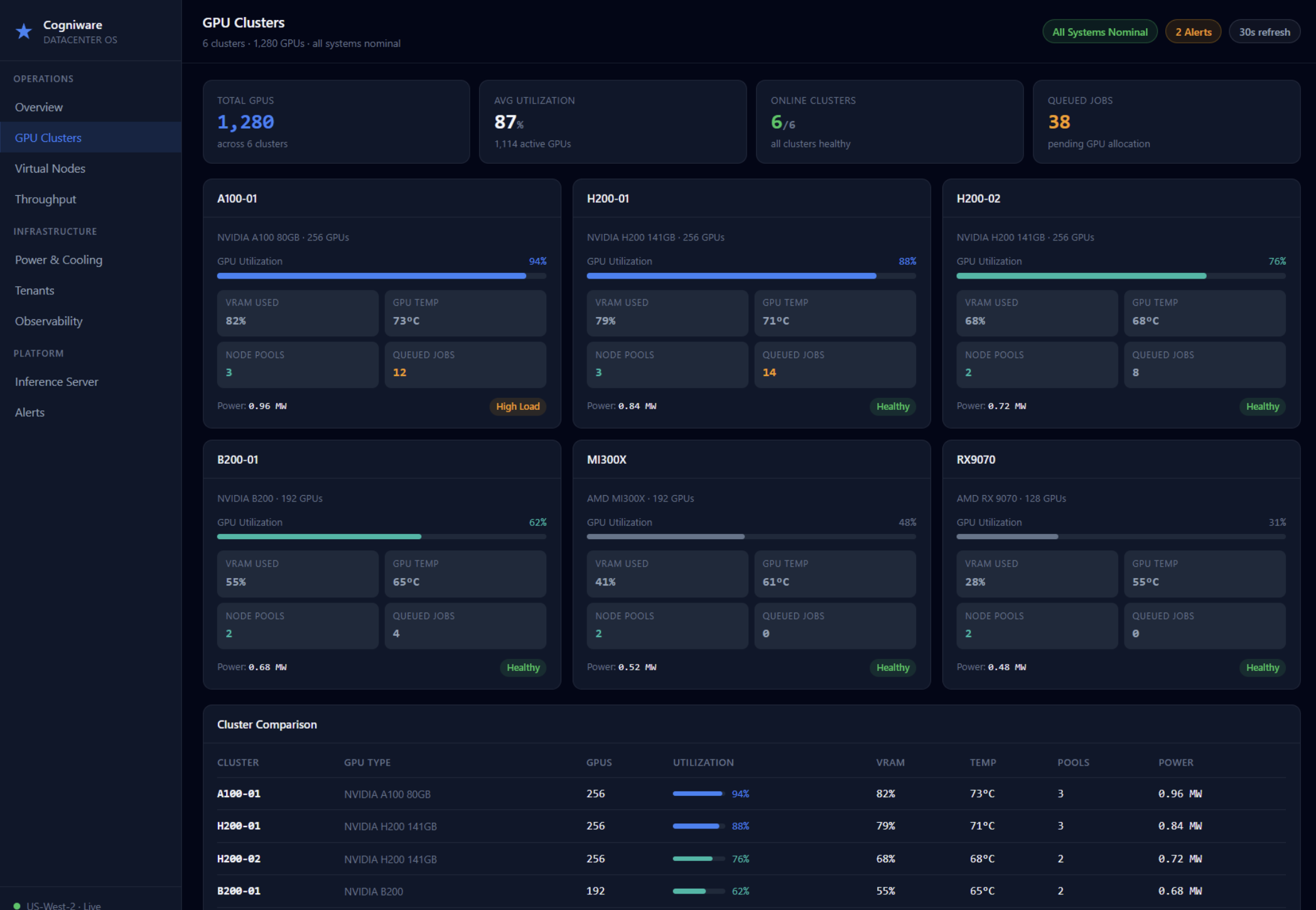

Cogniware software and services combine to enable you to maximize your AI infrastructure investments – delivering the most cost-effective, high performance inference at scale.

Inference stack optimization services

Neocloud data center design services

Middleware that makes AI more efficient

Maximize the impact of every GPU.

Patent-pending Cogniware technology helps enterprises cut the cost and complexity of generative AI by making inference more efficient, scalable, accurate, and hardware-flexible.

Cogniware middleware optimizes how GenAI systems use compute resources, enabling organizations to run multiple LLMs on a single device, improve hardware utilization, and reduce infrastructure costs by up to 70%.

Unique dual-reasoning, multi-model inference improves accuracy, reduces hallucinations, and increases efficiency via intelligent model routing and orchestration.

The platform supports deployment across NVIDIA, Intel, AMD, and other architectures, helping organizations avoid vendor lock-in while scaling AI workloads more cost-effectively.

Optimize your inference stack from models to megawatts.

Our strategic consulting services to help enterprises design, optimize, and scale high-performance generative and agentic AI systems so they deliver better economics, performance, and business impact.

We maximize your infrastructure investment. Benchmark current inference performance and implement a range of performance enhancing techniques, from cache optimization to model routing and orchestration.

We help you build smarter. Plan flexible, high performance architectures from scratch, focused on delivering scale, throughput, and resiliency – while maintaining flexibility to change models and vendors without replatforming.

Engineer for high density AI compute.

We design AI-native NeoCloud data centers that deliver the density, resiliency, performance, and flexibility required to run modern AI workloads at scale.

Our infrastructure architecture is purpose-built for high-density AI compute, combining advanced power engineering, progressive liquid-cooling readiness, high-throughput storage, tenant isolation, and expansion capacity.

Our network and accelerator strategy supports large-scale training and low-latency inference with non-blocking 800G fabric, RDMA/RoCEv2 capability, and flexible support for NVIDIA, AMD, and Intel platforms.

From sovereign AI environments to commissioning and operations, we support the full lifecycle required to deploy secure, resilient, production-ready AI infrastructure.

Our impact

-

We optimize compute utilization for AI workloads

-

We cut down on power needed for compute and cooling

-

We reduce the need to build additional data center facilities